Assignment Library Search.

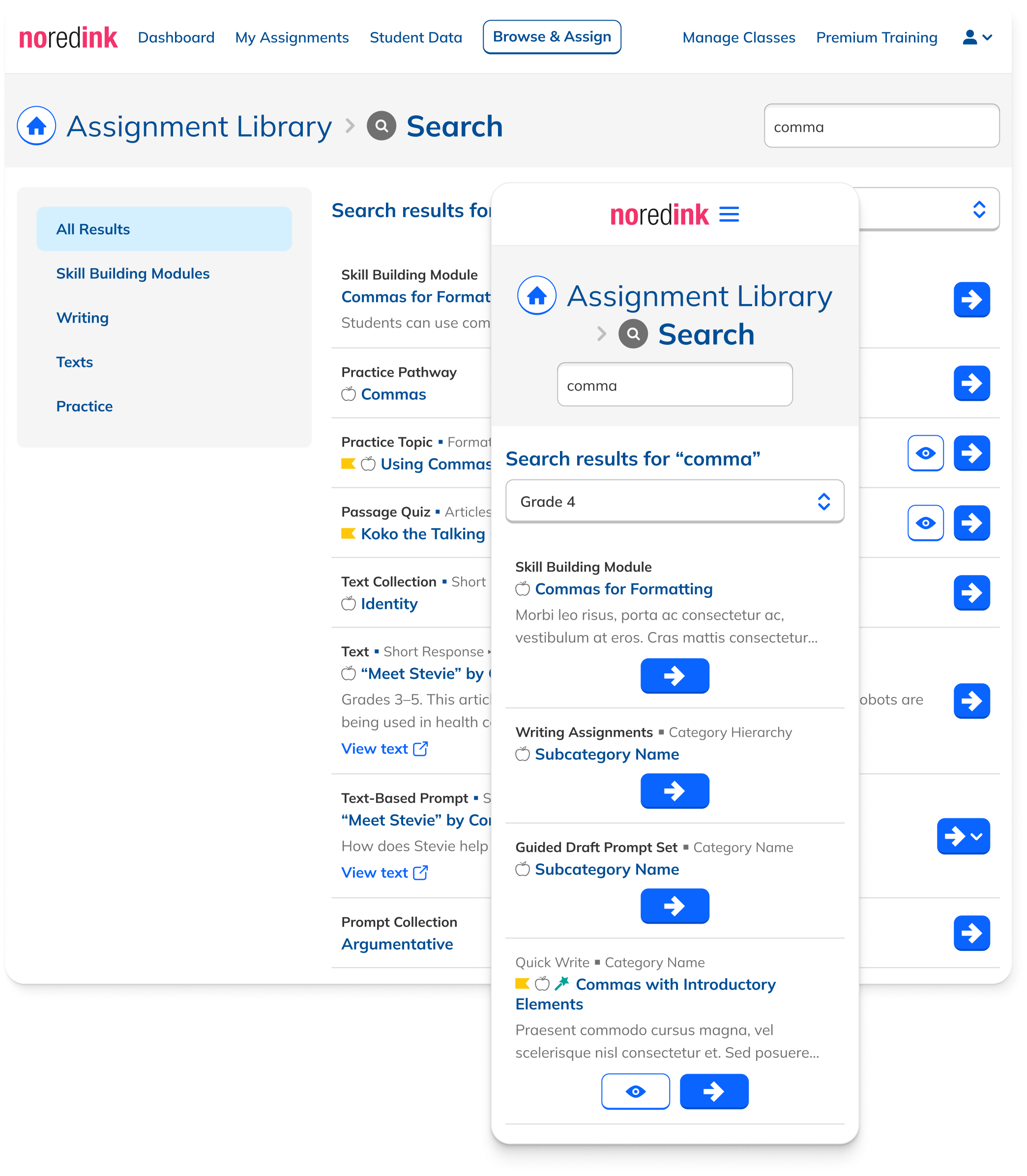

How rebuilding search from the ground up surfaced writing content, shifted teacher behavior, and drove a 23% increase in adoption.

Ben Dansby · Product Design

Search was failing teachers.

NoRedInk's Assignment Library is vast, with thousands of pieces of content. Search accounted for 21% of all Assignment Library sessions, making it one of the most important paths to content discovery.

But only 6% of Search sessions converted to writing assignments. Teachers searching for grammar terms weren't being shown relevant writing assignments even when they existed. Many assumed we didn't have what they needed.

This wasn't just a UX problem. NoRedInk's company goal was to be seen as more than a grammar tool. Every bad search result was evidence to the contrary.

Results were unweighted and always displayed alphabetically

Writing content appeared last or not at all

Individual writing prompts were not indexed, making them invisible to Search

Long, unsortable result lists forced teachers to sift through irrelevant items

Search took 5–10 seconds because it ran on the frontend

Safari users couldn't use Search at all due to browser incompatibility

A metric and a mantra.

The overarching metric: increase WACTS (writing and critical thinking skill assignments) created per teacher. This was a company OKR, and Search was one of the clearest levers to pull.

Secondary metrics included writing conversion per search session, overall assignment conversion, and Search adoption among Assignment Library users.

"Show me the most relevant, highest-leverage content first."

Our qualitative north star

The current experience violated this routinely. Fixing it required changes at the engineering, data, IA, and UI layers simultaneously.

More WACTS assigned per teacher

Primary company metric: writing and critical thinking assignments.

Higher writing conversion per search

Teachers who search should find writing content and act on it.

Search adoption among library users

More teachers choosing Search as their path into the library.

Four layers of improvement.

Fixing Search wasn't a UI problem alone. Working with a PM, curriculum specialist, and engineer, we tackled it across four layers, each one unlocking the next.

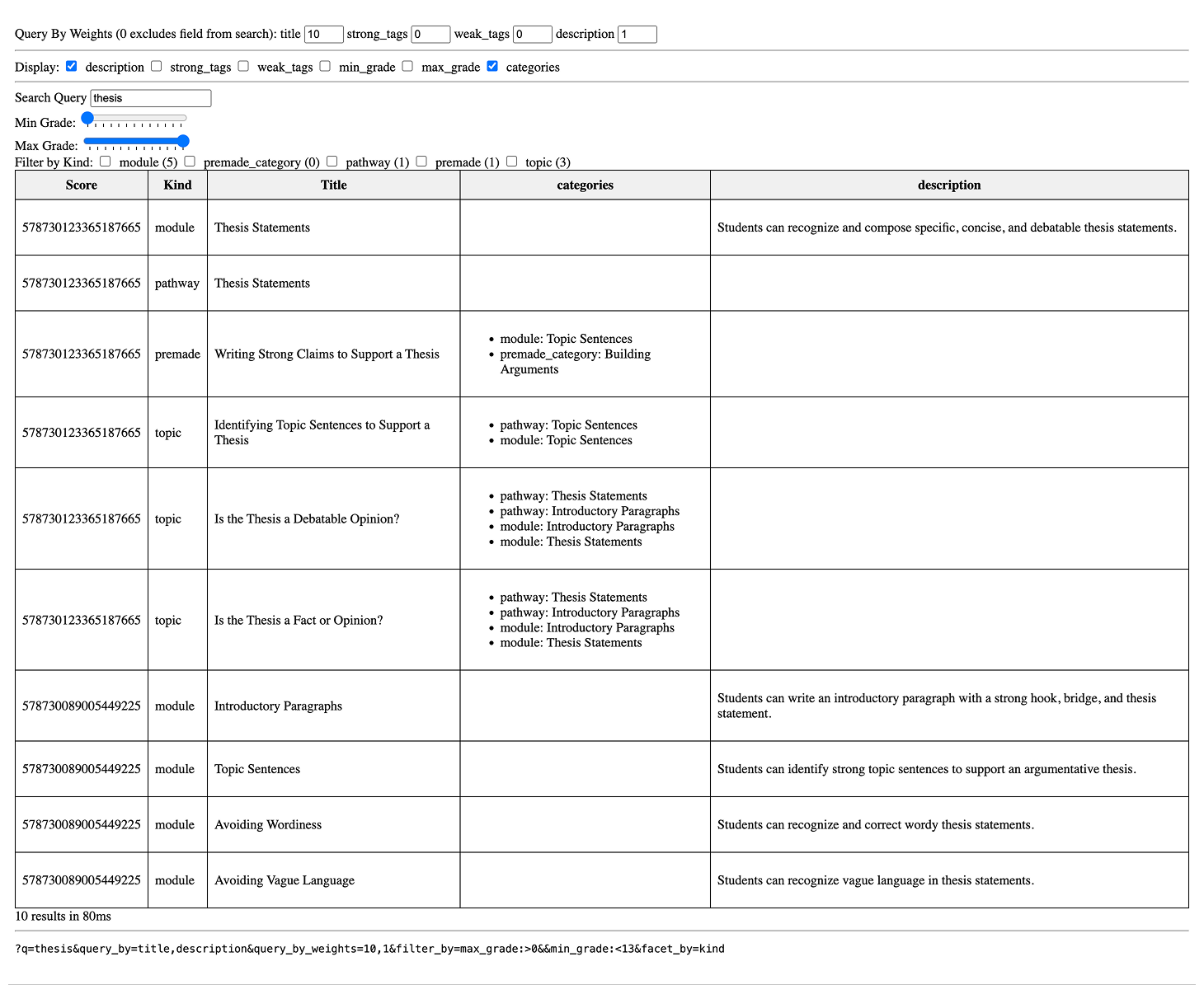

Backend migration

Moved from a slow frontend-based system to a backend search engine. Eliminated performance issues, enabled full-library indexing, and unlocked relevance tuning.

Tiered weighting framework

High-leverage writing modules received additional weight. Synonym groups and manual overrides honored teacher intent. Exact matches always outranked fuzzy ones.

Full writing prompt indexing

For the first time, individual writing prompts were indexed and returned as results, opening vast new swathes of writing content to search users.

Redesigned UI

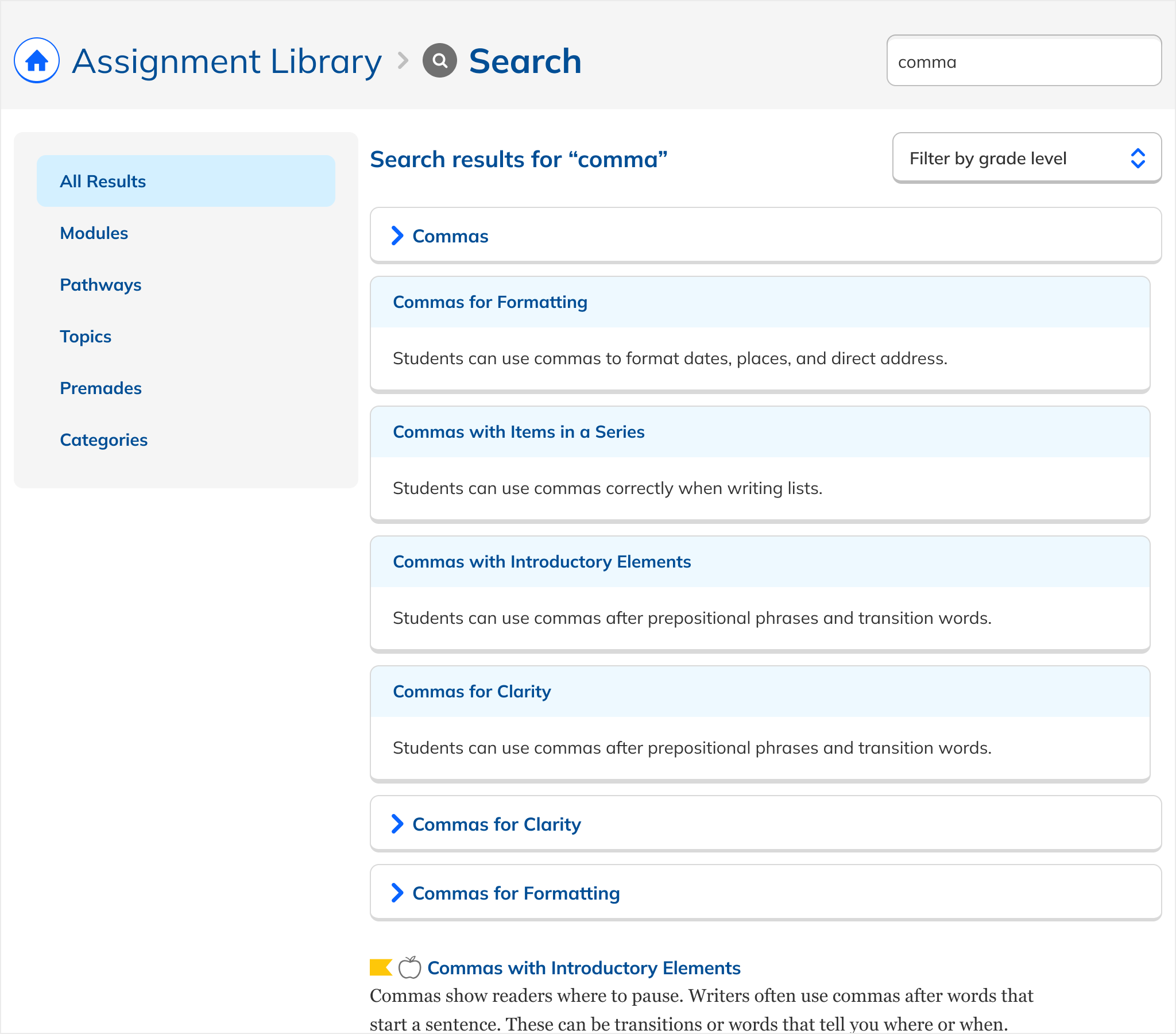

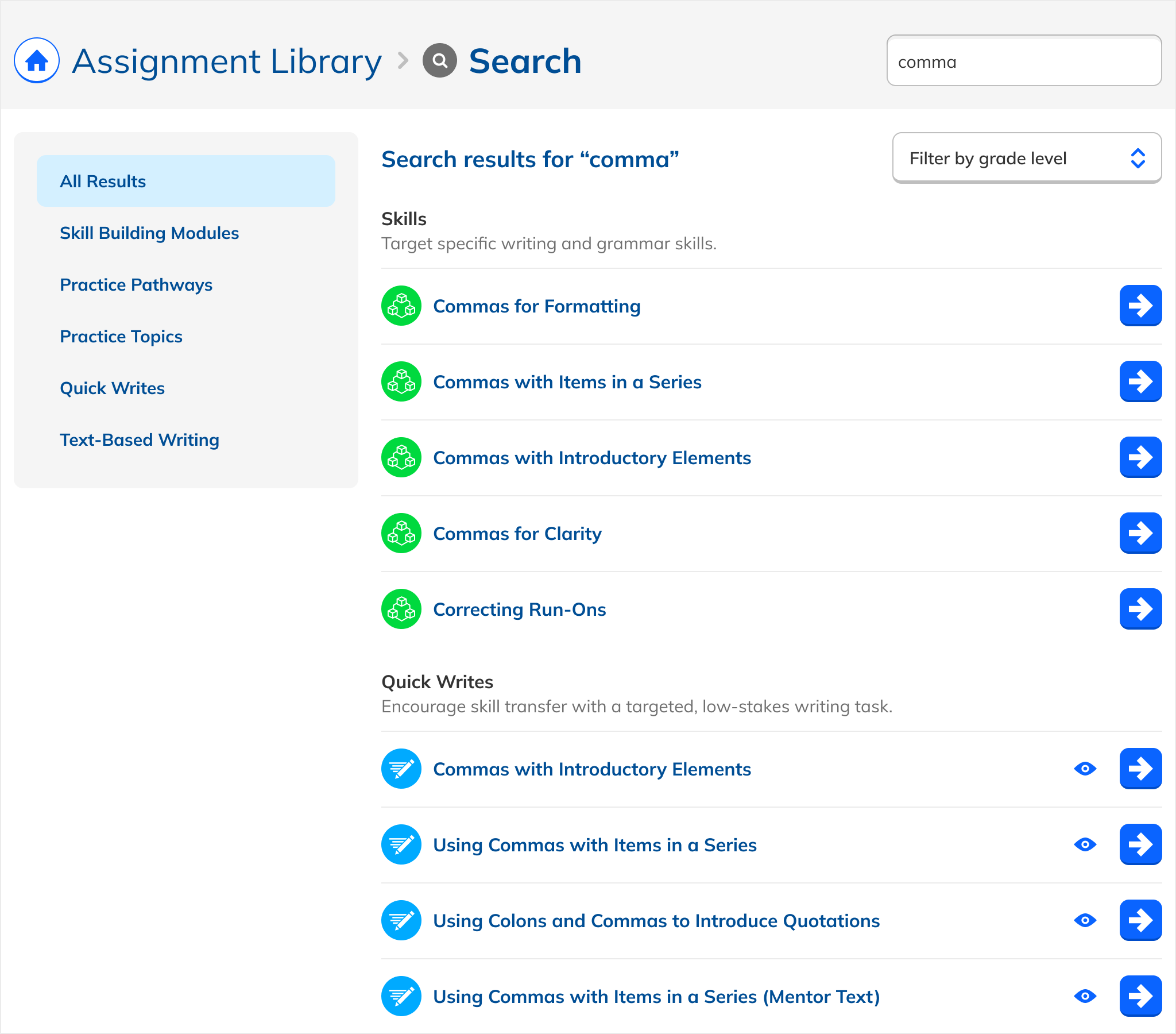

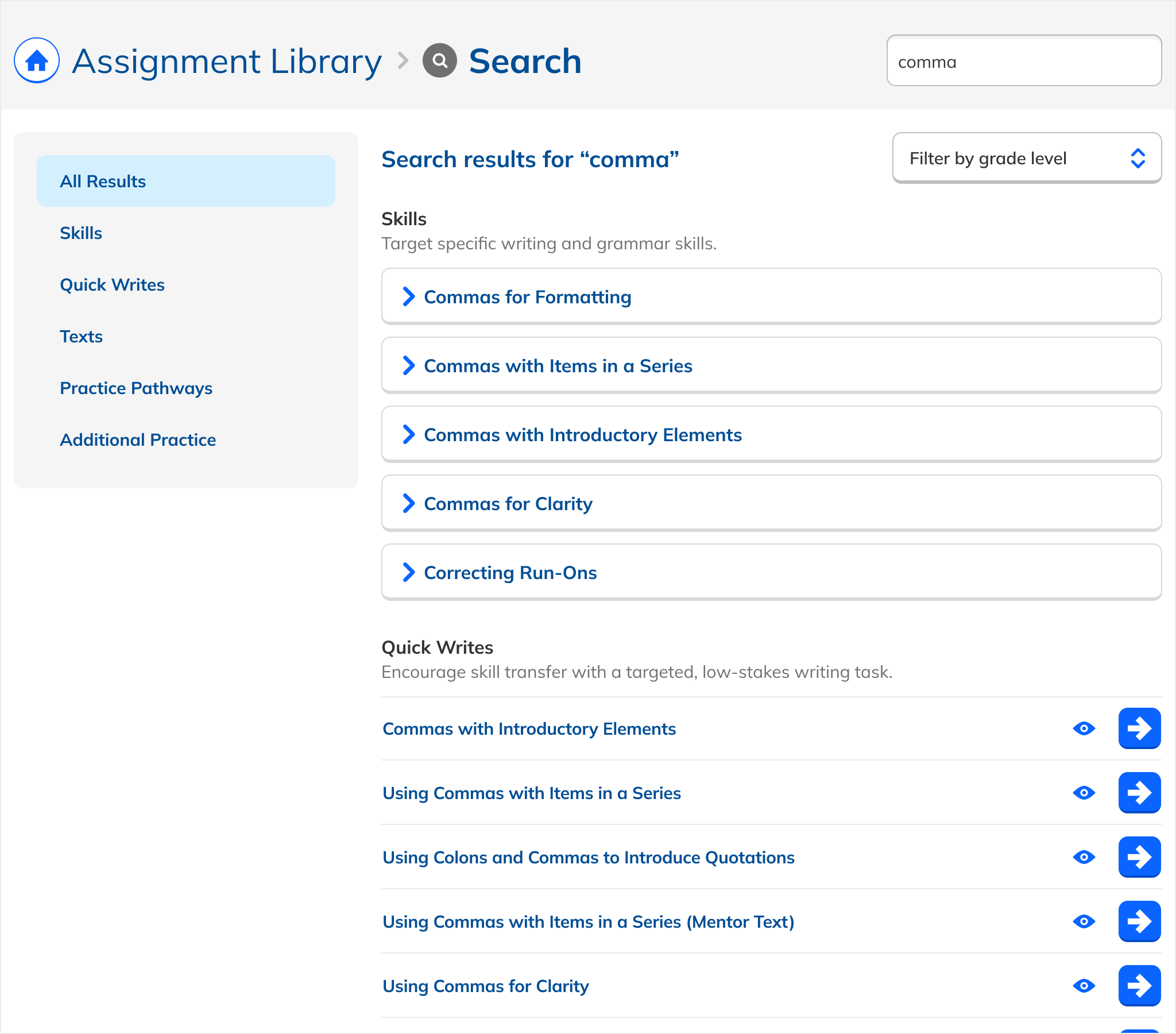

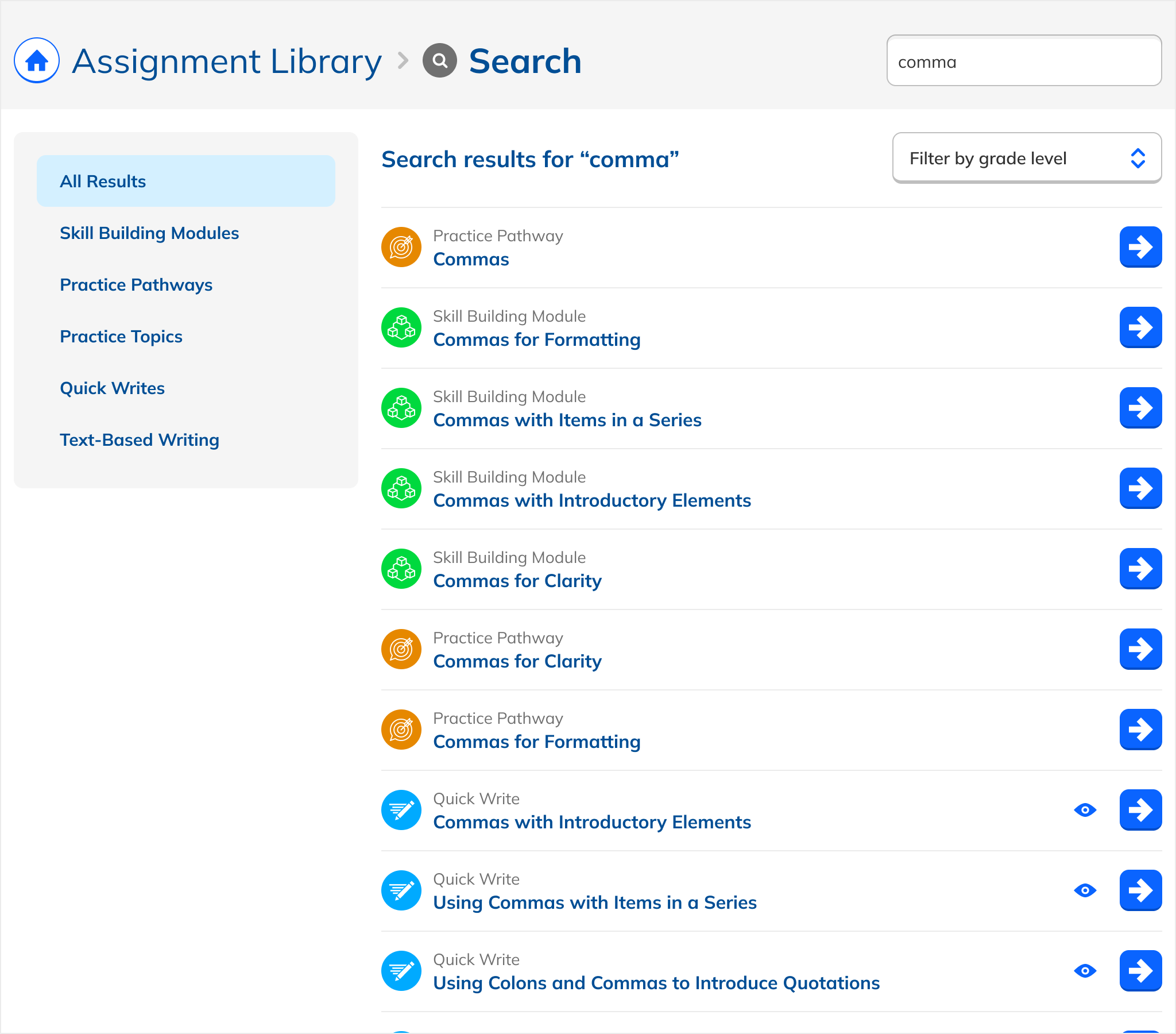

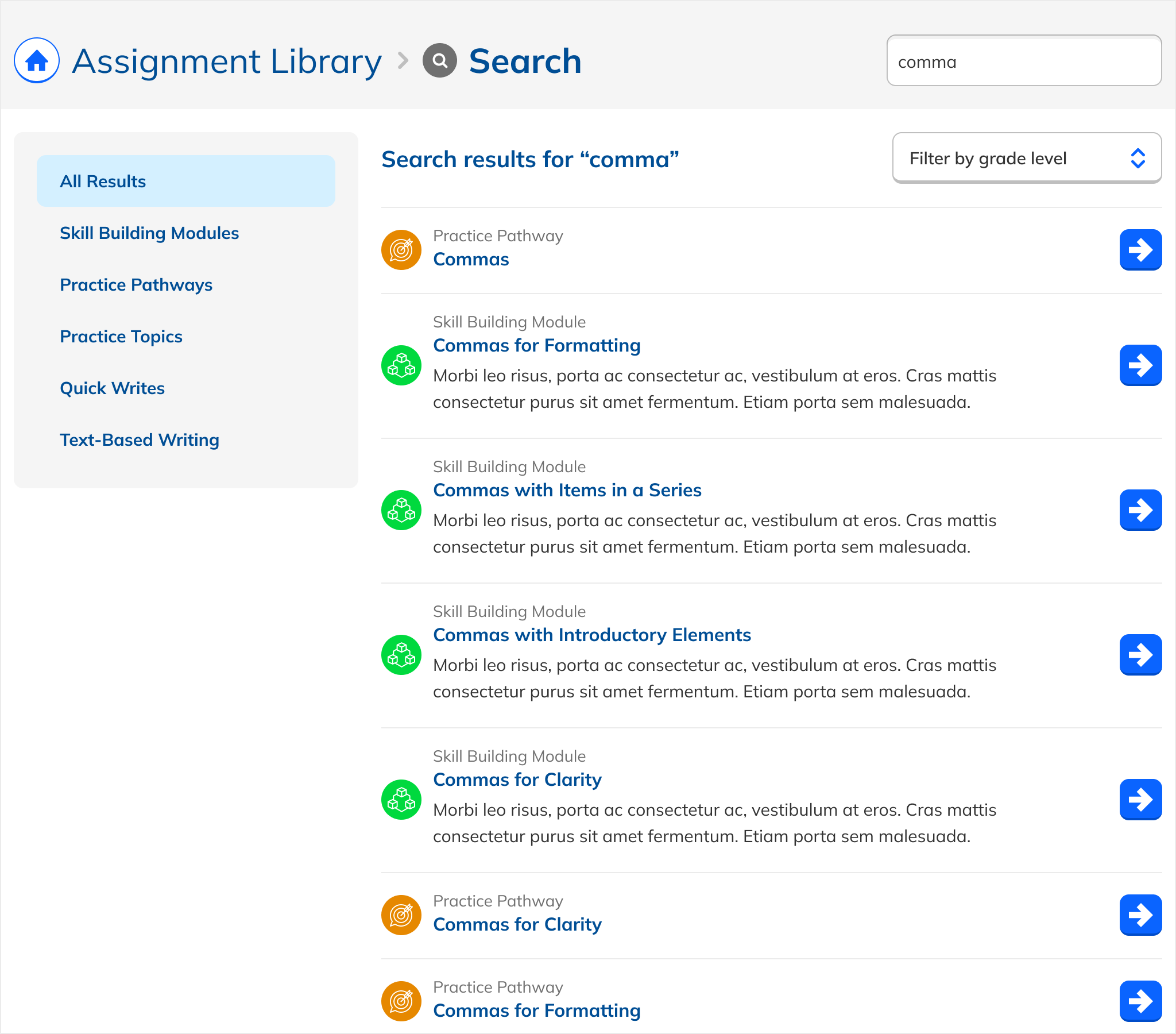

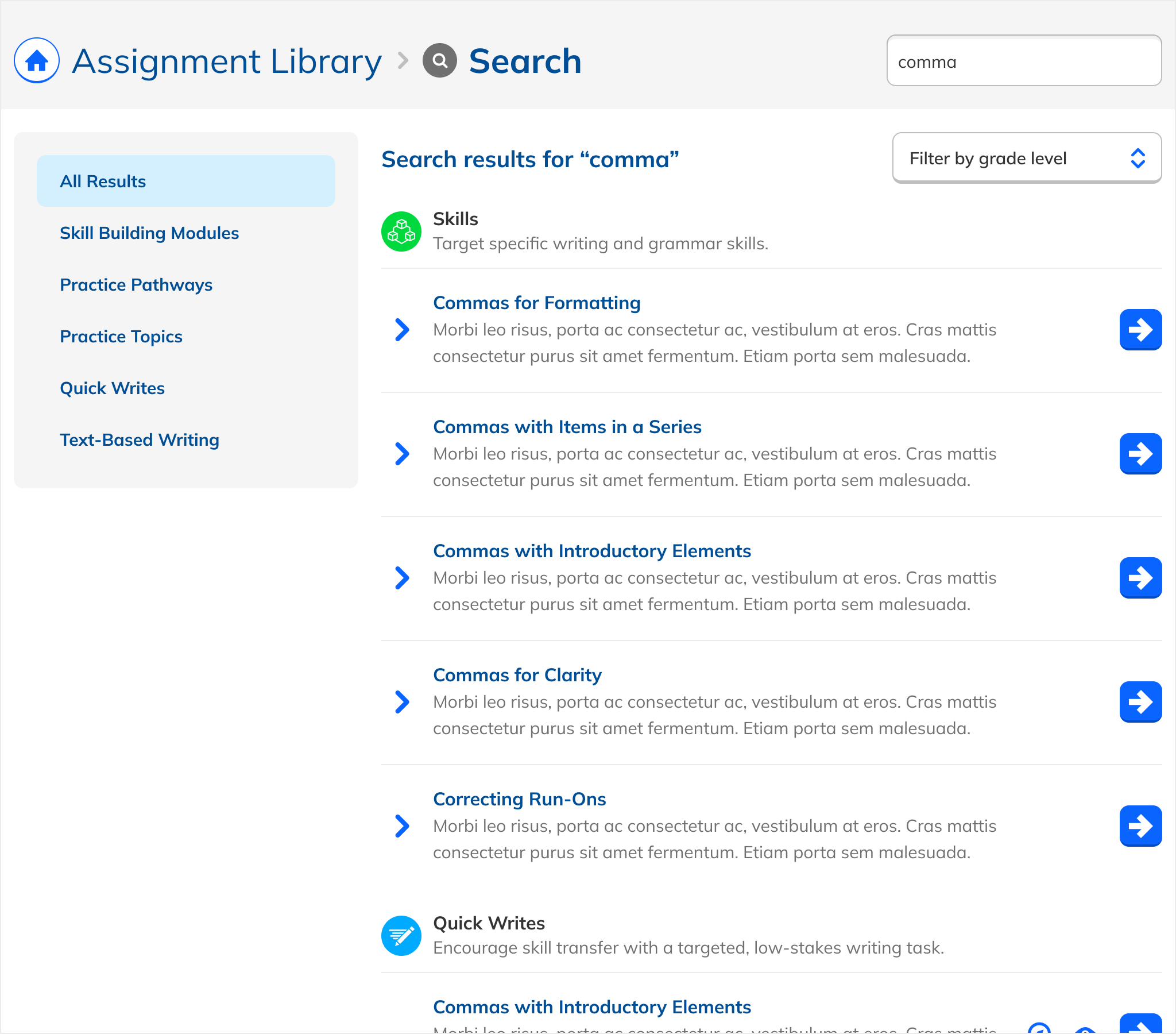

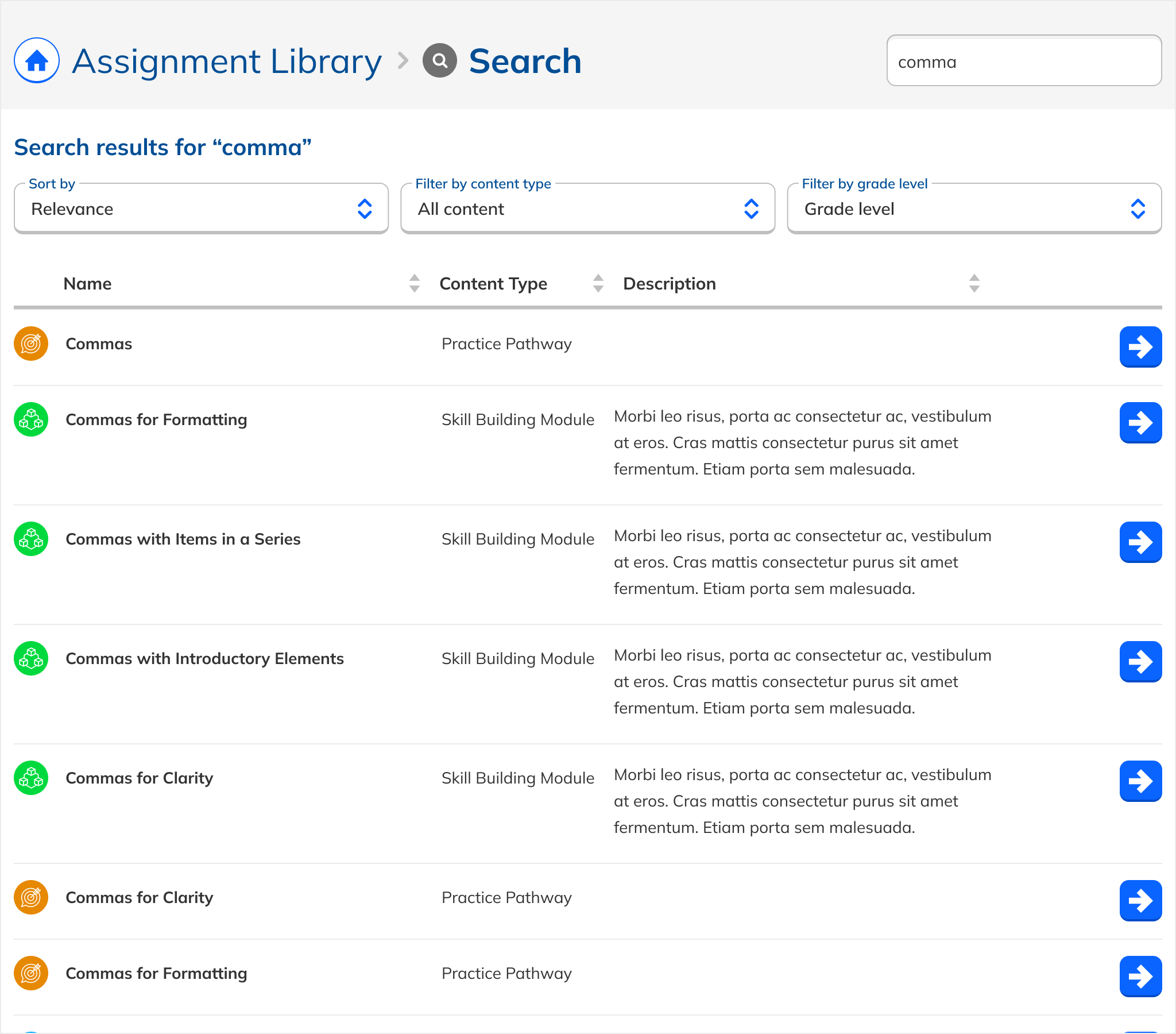

A simple, clearly labeled, filterable results list. Low learning curve, uniform formatting, single consistent action per result.
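To make the tiered weighting layer described above more concrete, here is a minimal Python sketch. It is not NoRedInk's implementation: the score values, the synonym group, the override entry, and the names `Item`, `score`, and `rank` are all hypothetical, chosen only to show how a boost for high-leverage content, synonym expansion, and manual overrides can coexist while exact matches still outrank fuzzy ones.

```python
from __future__ import annotations
from dataclasses import dataclass

# Every weight, synonym group, and override here is a made-up illustration.
EXACT_SCORE = 100.0
FUZZY_SCORE = 10.0
HIGH_LEVERAGE_BOOST = 5.0  # kept smaller than EXACT_SCORE - FUZZY_SCORE

SYNONYM_GROUPS = [{"thesis", "claim", "argument"}]
OVERRIDES = {"essay": "Writing a Thesis Statement"}  # query -> pinned title


@dataclass(frozen=True)
class Item:
    title: str
    keywords: frozenset[str]
    high_leverage: bool = False


def expand(terms: set[str]) -> set[str]:
    """Add every synonym of any query term."""
    expanded = set(terms)
    for group in SYNONYM_GROUPS:
        if terms & group:
            expanded |= group
    return expanded


def score(item: Item, query: str) -> float:
    normalized = query.lower().strip()
    if OVERRIDES.get(normalized) == item.title:
        return float("inf")  # manual override pins this result first
    terms = set(normalized.split())
    if terms and terms <= item.keywords:
        base = EXACT_SCORE  # every query term matches directly
    elif expand(terms) & item.keywords:
        base = FUZZY_SCORE  # matched only partially or via a synonym
    else:
        return 0.0
    return base + (HIGH_LEVERAGE_BOOST if item.high_leverage else 0.0)


def rank(items: list[Item], query: str) -> list[str]:
    """Return titles of matching items, best score first, ties by title."""
    scored = [(score(item, query), item.title) for item in items]
    hits = [(s, t) for s, t in scored if s > 0]
    return [t for s, t in sorted(hits, key=lambda pair: (-pair[0], pair[1]))]


LIBRARY = [
    Item("Subject-Verb Agreement", frozenset({"subject-verb", "agreement"})),
    Item("Writing a Thesis Statement", frozenset({"thesis", "statement"}), True),
    Item("Claims and Evidence", frozenset({"claim", "evidence"}), True),
    Item("Argument Basics", frozenset({"argument"})),
]

print(rank(LIBRARY, "claim"))
print(rank(LIBRARY, "subject-verb agreement"))
```

In this toy library, a search for "claim" returns the exact match first and the synonym matches after it, with the high-leverage writing item ahead of the unboosted one. A search for "subject-verb agreement" returns only the grammar item, because the boost is too small to lift an unrelated writing result over a relevant one.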

Heterogeneous content, unified experience.

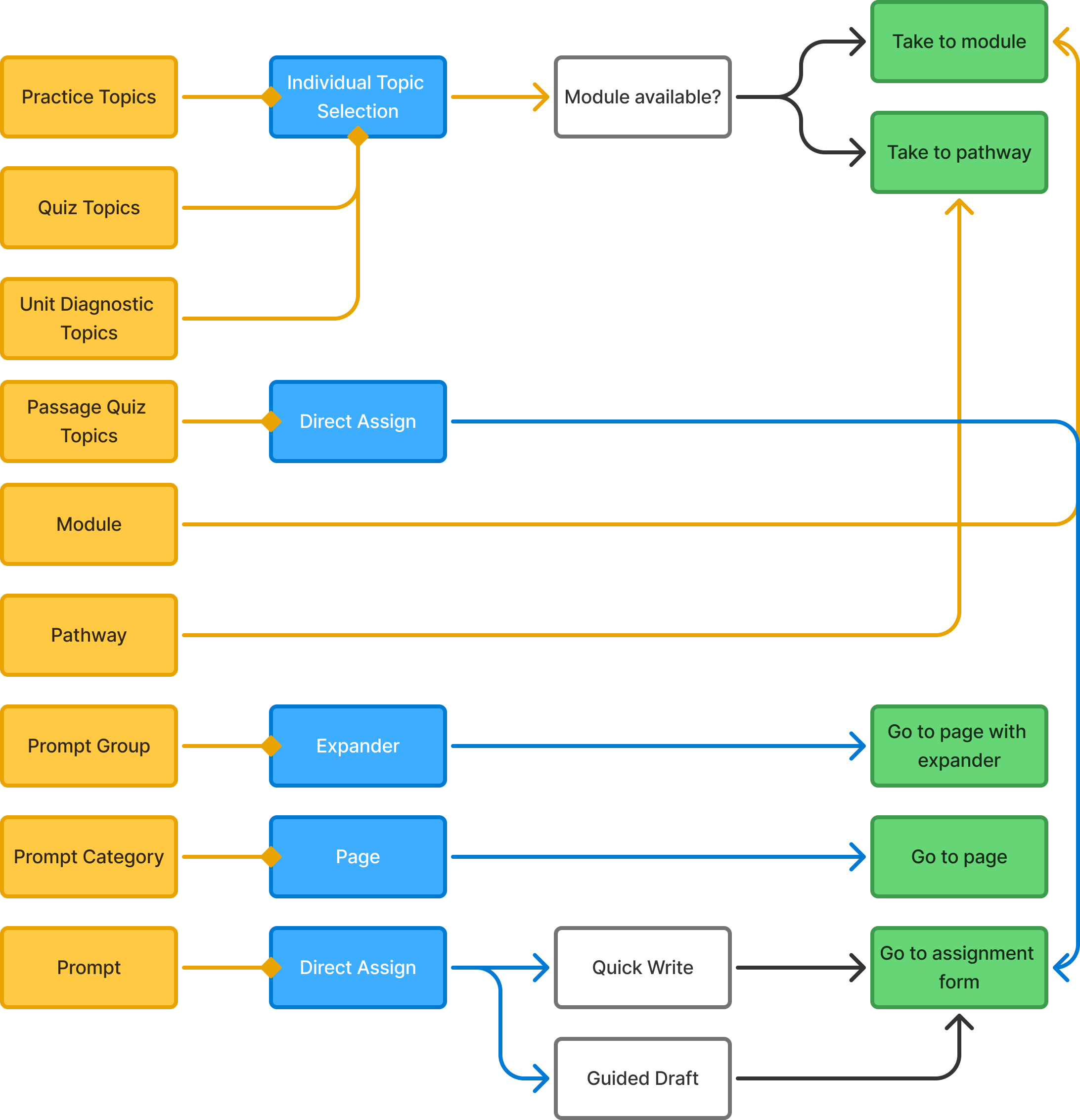

A major design challenge: the Assignment Library contains fundamentally different types of content with different behaviors. Practice Topics are checkboxes combined into assignments. Writing Prompts are standalone flows. Passage Quizzes can only be assigned as Quizzes. Modules and Pathways group related content.

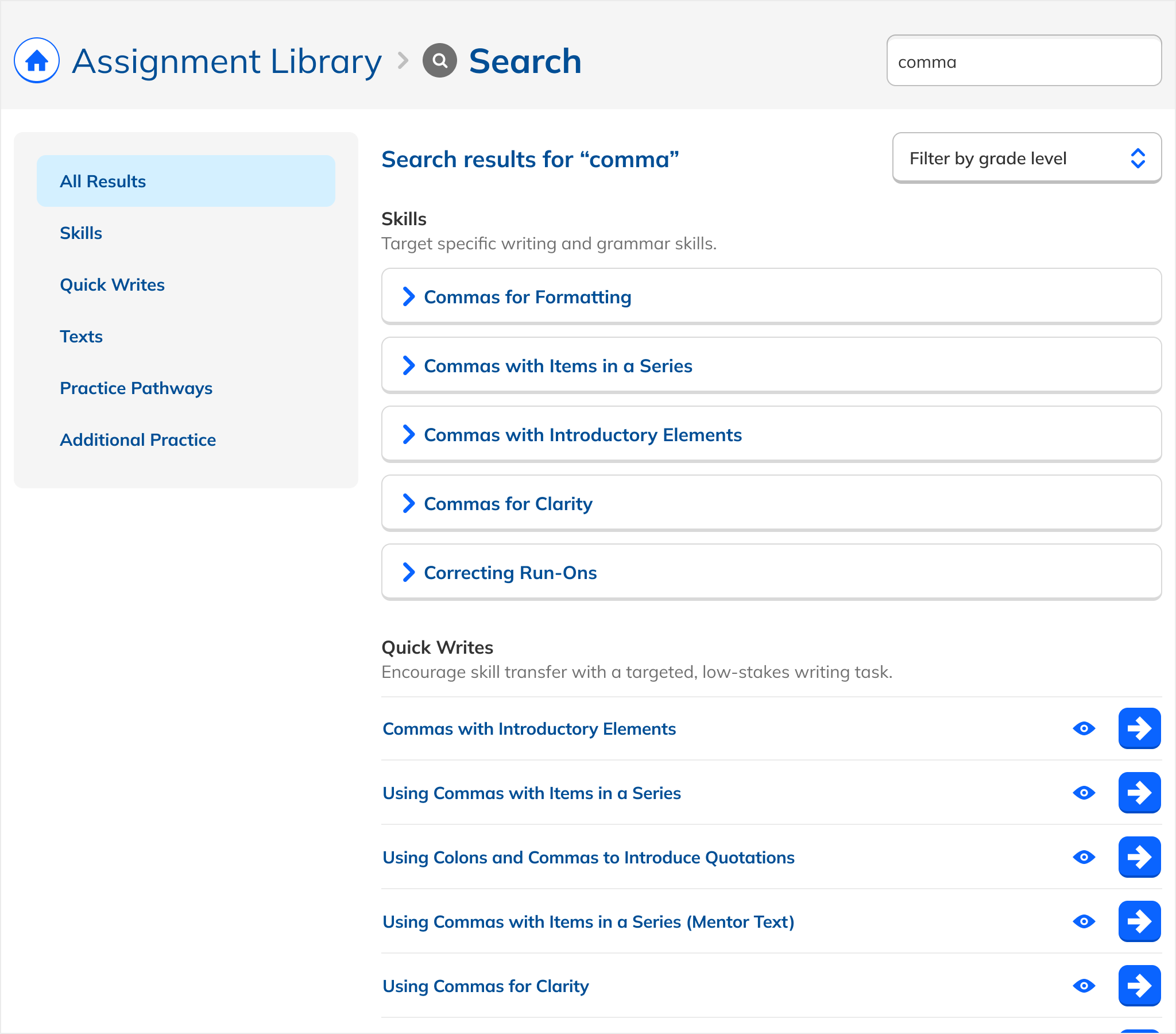

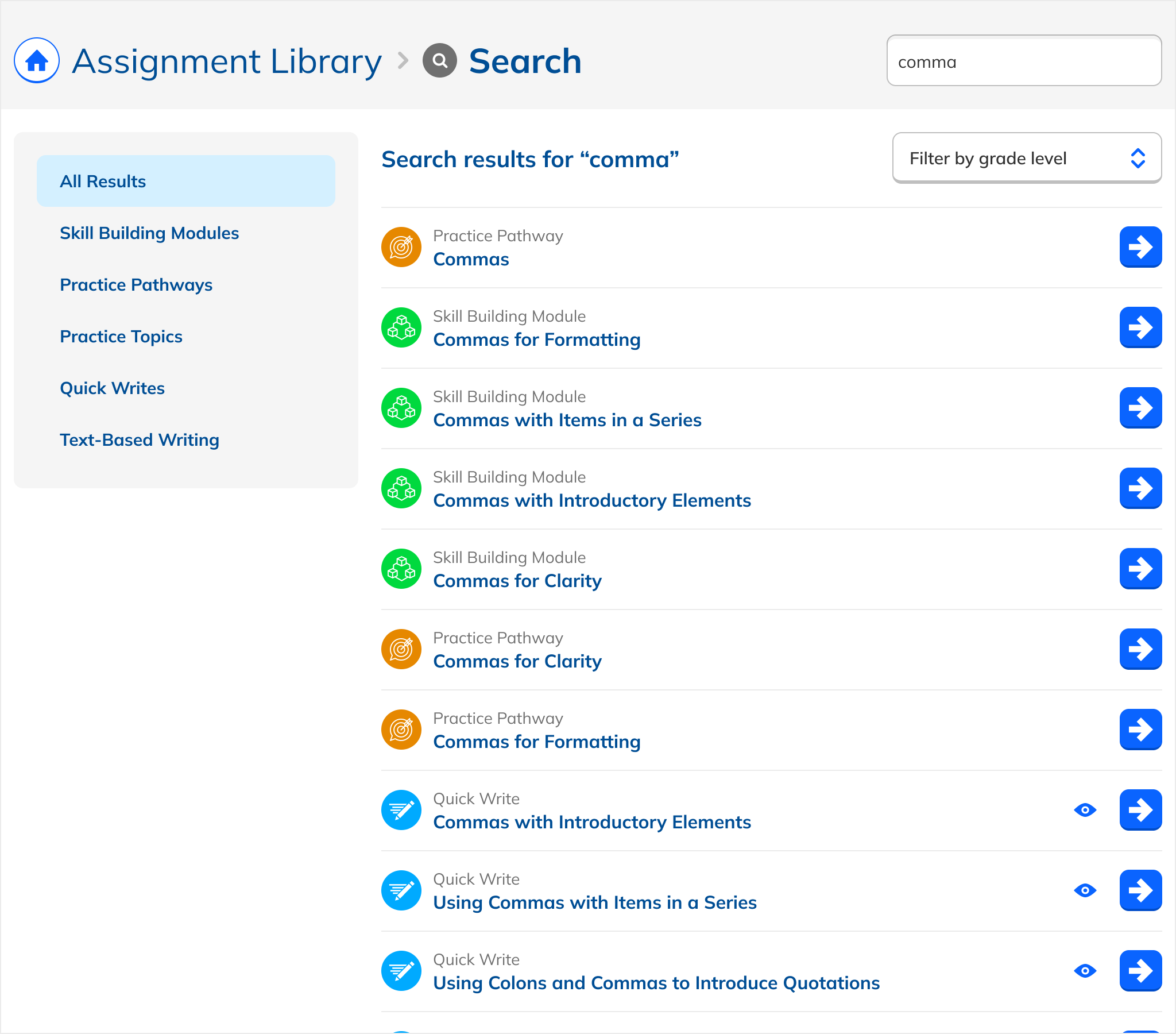

Early iterations reflected this native variety: each type looked and functioned as it did in the library. But this created cognitive load: users had to learn multiple interaction patterns just to act on search results.

The solution: uniform presentation across all types. Every result appeared in the same format, with a single action that took the user to a dedicated page. The emphasis shifted from content logistics to content itself.

Nearly 30 iterations.

The design evolved in tight coupling with a live prototype, as relevance weights and tags were adjusted in parallel. Early iterations tried iconography, grouped sections, grid layouts, and varying amounts of metadata. Each path eventually led back to the same insight: the simpler, the better.

Promote writing. Don't inflate it.

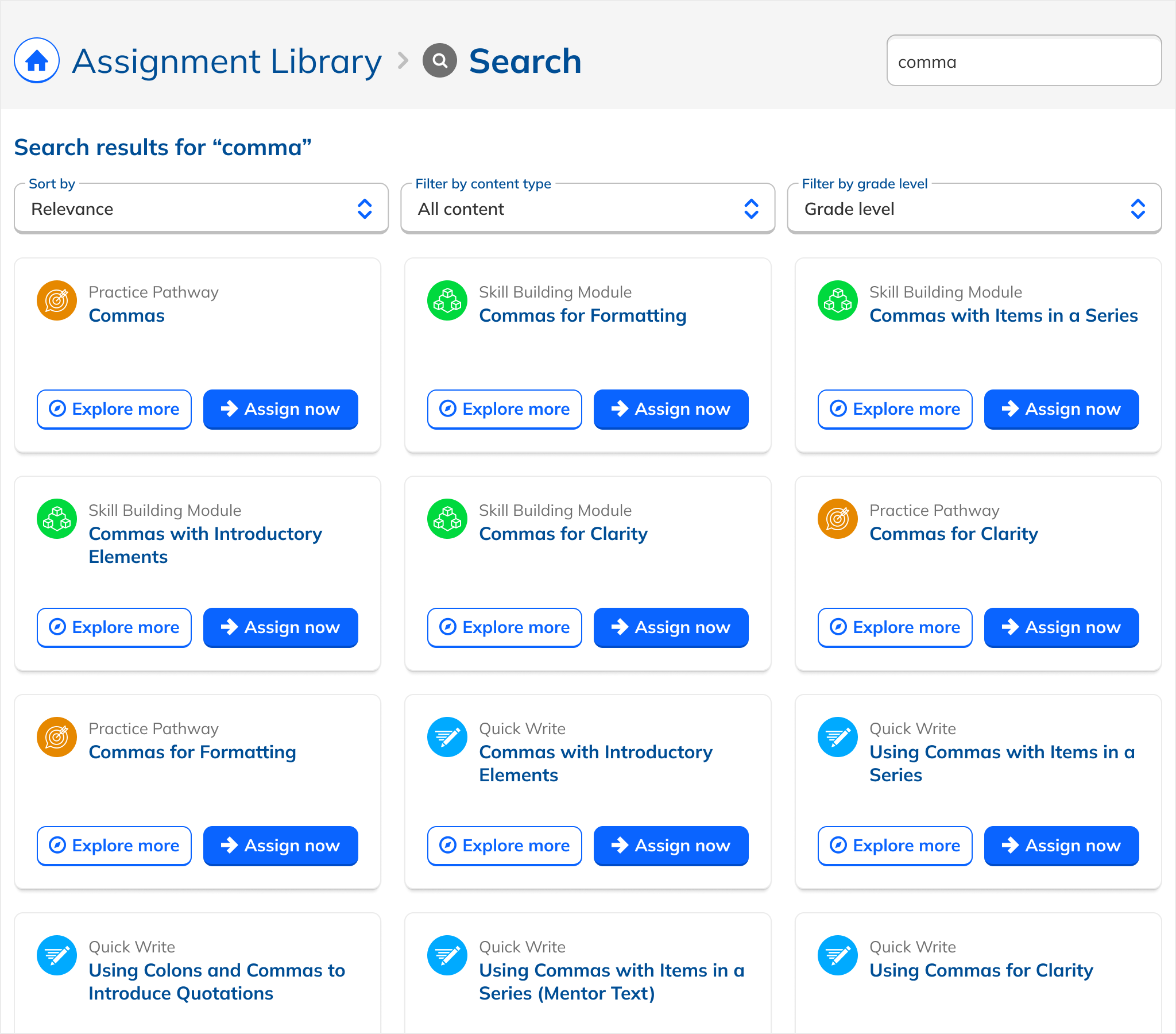

The most debated question: should writing results be artificially boosted to give them greater visual prominence, whether by expanding writing result rows, adding hero treatments, or pulling them above grammar results regardless of relevance?

We went back and forth. After iterating on both design and implementation, we came down firmly on the side of restraint.

Presenting the most relevant results remained our north star. Manipulating the order or size of results based on content type would undermine teacher trust, and that trust was the whole point.

Serve business goals through proper weighting and tagging, not by skewing the visual hierarchy. The algorithm surfaces writing; the design just gets out of the way.

Expanded writing rows

Tried hero-sized cards for writing results. Felt promotional, not functional. Created visual noise without improving relevance.

Writing-first ordering regardless of query

A teacher searching for "subject-verb agreement" should see grammar first. Forcing writing up top punishes relevance.

Uniform list, relevance-ranked

Every result looks the same. Writing appears high when it's truly relevant. Trust the weighting. Don't fake it.

Staged, validated, and thorough.

Search touched engineering, information architecture, content data, and UI simultaneously. The release process was structured to validate each layer before opening it up.

Validated 20+ search archetypes

Partnered with Curriculum and Engineering to ensure relevant results across a broad range of query types.

Imported tag data and implemented overrides

Manual overrides for edge cases where the algorithm needed a nudge to honor teacher intent.

Internal dogfooding

Ran the new experience with internal teams before any external exposure.

10% rollout, then wide release

Limited release gave us real data before committing fully. Wide release followed once results looked promising.
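The source does not say how the 10% cohort was chosen. One common approach, sketched below purely as an assumption, is deterministic hash bucketing: each user ID is hashed into one of 100 buckets, and users in the lowest buckets see the new experience. The function name `in_rollout` and the salt `"new-search"` are hypothetical.

```python
import hashlib


def in_rollout(user_id: str, percent: int, salt: str = "new-search") -> bool:
    """Return True if this user falls inside the rollout percentage.

    The same user always lands in the same bucket, so raising `percent`
    from 10 to 100 only adds users and never removes anyone.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket in the range 0..99
    return bucket < percent


# Roughly 10% of a hypothetical population of teacher IDs is selected.
teachers = [f"teacher-{n}" for n in range(10_000)]
selected = [t for t in teachers if in_rollout(t, 10)]
print(len(selected) / len(teachers))
```

Because assignment depends only on the ID and the salt, a teacher in the limited release keeps the new Search across sessions, which keeps metrics for the two groups comparable until wide release.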

Engineering

Backend migration, search API, relevance tuning infrastructure.

Curriculum

Content tagging, synonym groups, and validating search archetype results.

Product & Design

UI iteration, architecture decisions, and tracking metric outcomes post-launch.

Teachers who searched assigned more writing.

Teachers who used Search were twice as likely to assign writing as those who didn't, representing a 13.5% year-over-year increase

WACTS per power user (searched 10+ times) vs. 2.32 baseline; the heaviest Search users showed the clearest behavior shift

Search adoption, up from 34% to 42% of Assignment Library users, the strongest secondary metric movement
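The headline 23% adoption figure appears to be the relative lift implied by the move from 34% to 42%, rather than a change in percentage points. That reading is an inference from the two numbers, not something the source states. A small check of the arithmetic, with `relative_lift` as an illustrative helper name:

```python
def relative_lift(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a percentage."""
    return (after - before) / before * 100


adoption_before = 34  # % of Assignment Library users using Search
adoption_after = 42

point_change = adoption_after - adoption_before
lift = relative_lift(adoption_before, adoption_after)

print(f"{point_change} percentage points")  # 8 percentage points
print(f"{lift:.1f}% relative increase")     # 23.5% relative increase
```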

Meet the user where they are.

This project transformed a slow, often misleading search experience into a fast, writing-forward tool, showing that technical and experiential improvements working together can meaningfully shift teacher behavior.

But one feature can't shift user attitudes entirely. Teachers still think of NoRedInk as a grammar tool, and the adoption lift came mostly from more teachers using Search at all, a signal that we should have promoted the new experience more explicitly at launch.

Technical + UX = behavior shift

Neither the backend work nor the UI work alone would have moved the needle. The combination was the unlock.

Relevance over promotion

Trust the algorithm. Artificially inflating writing results would have undermined teacher trust in the tool.

Launch is part of the design

Adoption grew primarily from more teachers using Search at all. Promoting the change would have accelerated the gains.

Simplicity wins

Nearly thirty iterations led back to a flat, uniform list. Less metadata, fewer interaction patterns, more focus on content.